Safe deployments of Versioned workflows

At Uber, we manage billions of workflows with lifetimes ranging from seconds to years. Over a workflow's lifetime, its code often needs to change. To prevent the non-deterministic errors that such changes can cause, Cadence offers a Versioning feature. However, the feature's usefulness is limited because versioned changes are backward-compatible but not forward-compatible: new code can continue workflows started by old code, but old code cannot continue a workflow that has already recorded a newer version. This makes rollbacks and workflow execution rescheduling unsafe.

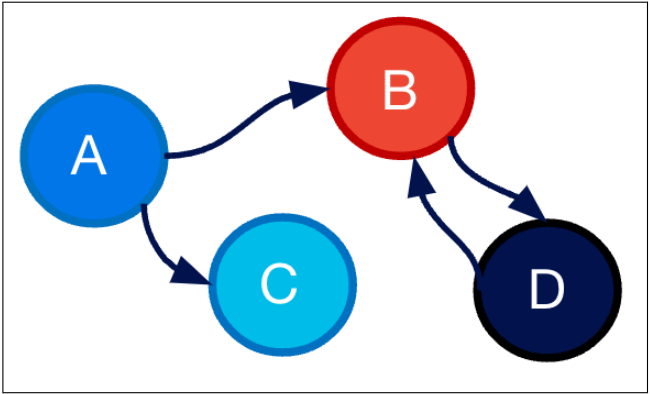

To address these issues, we recently enhanced the Versioning API to enable the safe deployment of versioned workflows by separating the rollout of code changes from the activation of new logic.